vocab.txt · nvidia/megatron-bert-cased-345m at main

By a mysterious writer

Description

We’re on a journey to advance and democratize artificial intelligence through open source and open science.

GitHub - ProjectD-AI/LLaMA-Megatron-DeepSpeed: Ongoing research training transformer language models at scale, including: BERT & GPT-2

BERT Transformers — How Do They Work?, by James Montantes

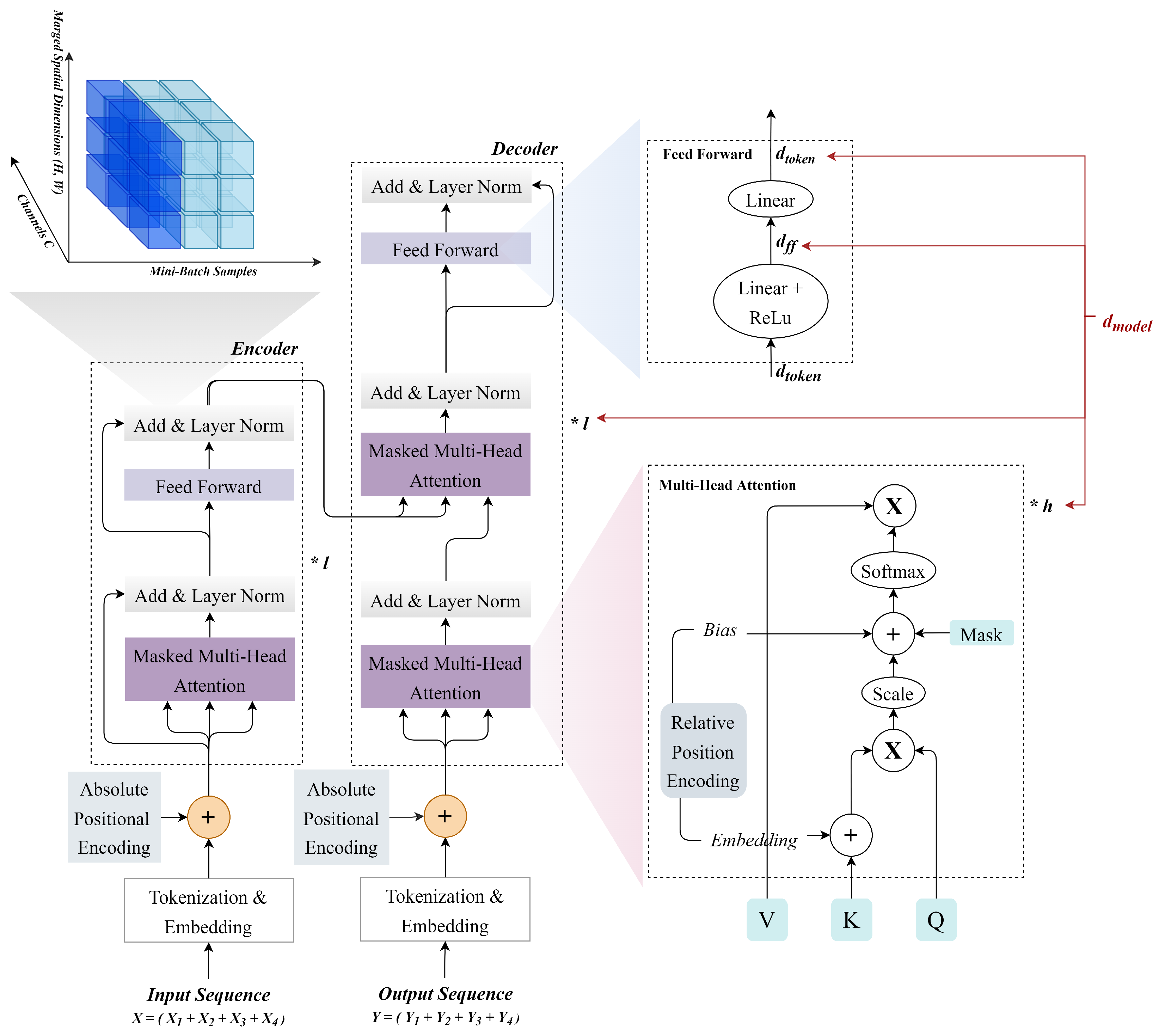

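zhangy03/Hybrid-Parallel-Transformer-pytorch: a transformer-based algorithm library with Chinese and English BERT, GPT, and T5 models - README.md at master - Hybrid-Parallel-Transformer-pytorch - OpenI - the OpenI (启智) open-source AI community, providing inclusive computing power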

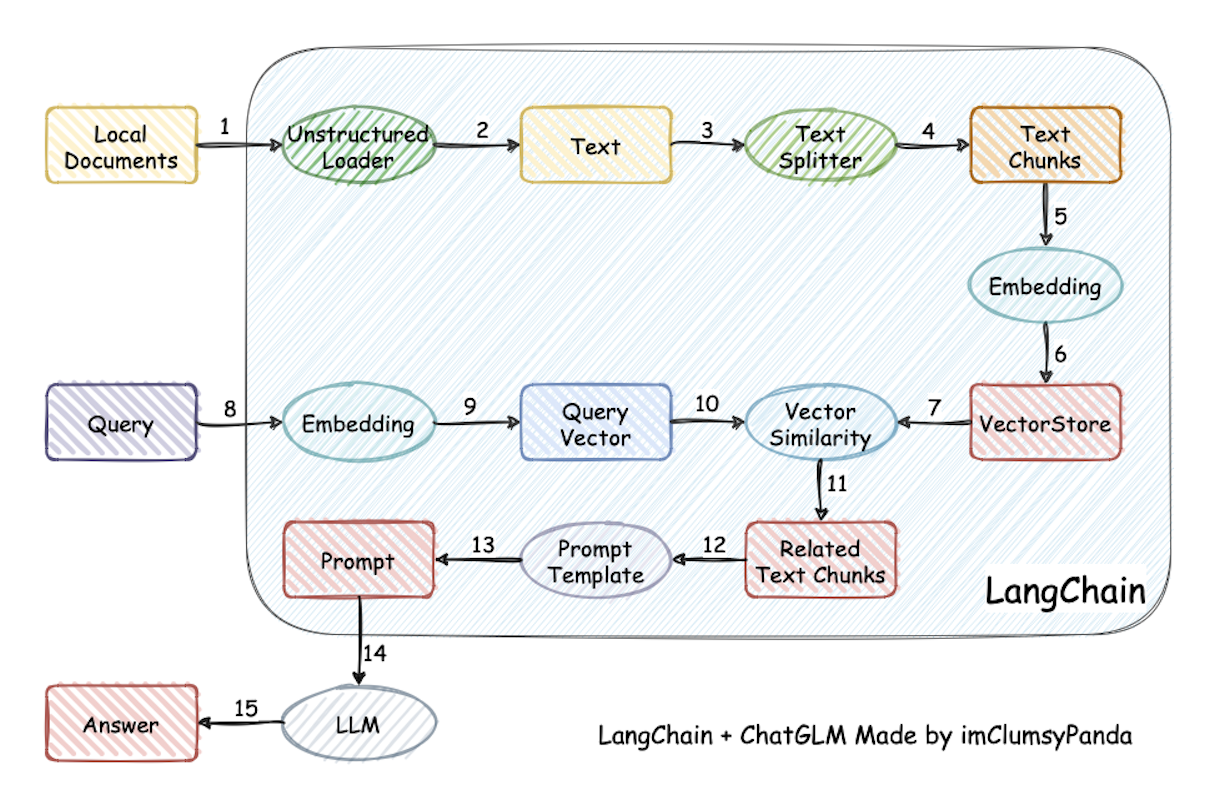

Mastering LLM Techniques: Training

GitHub - EleutherAI/megatron-3d

AI, Free Full-Text

A survey on text classification: Practical perspectives on the Italian language. - Abstract - Europe PMC

AMMU: A survey of transformer-based biomedical pretrained language models - ScienceDirect
